When AI writes almost all code, what happens to software engineering?

👋 Hi, this is Gergely with a subscriber-only issue of the Pragmatic Engineer Newsletter. In every issue, I cover challenges at Big Tech and startups through the lens of engineering managers and senior engineers. If you’ve been forwarded this email, you can subscribe here.

No longer a hypothetical question, this is a mega-trend set to hit the tech industry

This winter break was an opportunity for devs to step back from day-to-day work and play around with side projects – including using AI agents to juice up those half-baked or incomplete ideas. At least, that’s what I did with a few features I’d meant to build for months but didn’t get around to during 2025: self-service group subscriptions for larger companies, and my custom-built admin panel for The Pragmatic Engineer.

Unexpectedly, LLMs like Opus 4.5 and GPT-5.2 did an amazing job on the mid-sized tasks I assigned them: I ended up pushing a few hundred lines of code to production simply by prompting the LLM, reviewing the output, making sure the tests passed, then prompting it a bit more.

To add to the magical feeling, I then managed to build production software on my phone: I set up Claude Code for Web by connecting it to my GitHub, which let me instruct the Claude mobile app to make changes to my code and to add/run tests. Claude duly created PRs that triggered GitHub Actions (which ran the tests Claude couldn’t) and I found myself reviewing and merging PRs with new functionality purely from my mobile device while travelling. Admittedly, it was low-risk work and all the business logic was covered by automated tests, but I hadn’t previously felt the thrill of “creating” code and pushing it to prod from my phone.

This experience, also shared by many others, suggests to me that a step change is underway in software engineering tooling.
In this article – the first of 2026 for this publication – we explore where we are, and what a monumental change like AI writing the lion’s share of code could mean for us developers. Today, we cover:

1. Latest models create “a-ha” moments

Over the past few weeks, some experienced software engineers have shared personal “a-ha” moments about how AI tooling has become good enough to use for generating most of the code they write. Jaana Dogan, principal engineer at Google, was very impressed by how far Claude Code has come:

Thorsten Ball, software engineer at Amp, reflected (emphasis mine):

Malte Ubl, CTO at Vercel:

Longtime readers may recall Malte from his reflections on 20 years in software engineering, including 11 at Google. One could claim that the voices above have an interest in this topic given they work at companies selling AI dev tools. But engineers with no pull towards any vendor also made similar observations: David Heinemeier Hansson (DHH), creator of Ruby on Rails, described how his stance on AI has flipped due to the improved models:

Adam Wathan, creator of Tailwind CSS, reflected:

From “AI slop” to rocking the industry

One widely circulated “a-ha” moment has been from Andrej Karpathy, a cofounder of OpenAI. Andrej has not been involved in OpenAI for years, and is known to be candid in his assessment and critique of AI tools. Last October, he summarized AI coding tools as overhyped on the Dwarkesh podcast (emphasis mine):

Two months on, that view had been thoroughly revised, with Karpathy writing on 26 December (emphasis mine):

The creator of Claude Code, Boris Cherny, responded, sharing that all of his committed contributions last month were AI-written:

We previously did a deep dive on how Claude Code was built with Boris and other founding members of the team.

2. Why now?

Model releases in November and December seem like the tipping point where AI got really good at generating code:

I’ve used both Opus 4.5 and GPT-5.2 and they seem very competent at coding, which isn’t a niche opinion. Peter Steinberger, a software engineer with ~20 years’ experience and the creator of PSPDFKit, gave his impression of GPT-5.2:

Simon Willison is an independent software engineering expert in LLMs whom I pay attention to, and he pointed out the same:

The first-ever episode of The Pragmatic Engineer Podcast was with Simon, fittingly entitled AI tools for software engineers, but without the hype – and Simon continues to live up to that sentiment.

Wild prediction coming true?

One forecast which many – including me – were sceptical about was made by Anthropic’s CEO, Dario Amodei, last March, when he said:

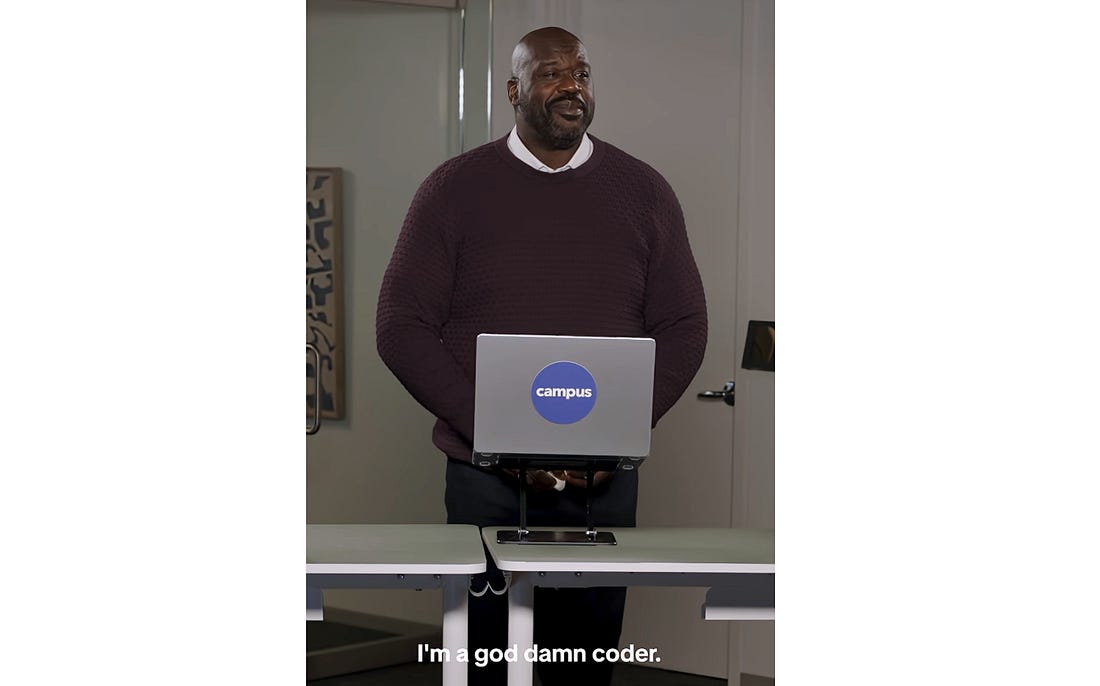

Yet it came to pass in December, when 100% of Cherny’s code contributed to Claude Code was AI-written. Playing devil’s advocate, one could point out that Claude Code is closed source, so the claim is hard to validate. And of course, the creator of something like Claude Code wants to showcase its high-performance capabilities. But I’ve talked with Boris and trust him; also, my own experience of using Claude Code tallies with his: I let Claude Code generate all the code I end up committing. When the code is not how I want it, I do more prompting to get the LLM to fix it.

Like Boris, I’ve ceased writing code by hand because I don’t have to do it, and the models seem capable enough. I can still do it, but it’s simply faster to leave it to the model. Even for code that I know well enough and can navigate in my IDE, I’ve noticed that prompting the agent can get edits done just as quickly as I would do them, if not faster. Nonetheless, I do find myself going to the IDE as I like to know that I can do the work.

For my own use case, the claim that AI can generate 90% of the code I write feels true enough; my stack is TypeScript, Node/Express, React, and Postgres. I understand the same is increasingly true of Go, Rust, and other popular languages and frameworks for which LLMs have plenty of training data. Interestingly, LLMs seem to be pretty good at C as well, as observed by Redis creator Salvatore Sanfilippo.

The remainder of this article assumes AI coding tools WILL become good enough to generate ~90%+ of the code for many devs and teams, this year. The most likely candidates to reach this landmark are startups seeking product-market fit – for whom throwaway work is no big deal – and also greenfield development projects, where no existing codebase needs to be ingested or understood before building something new.
Of course, there will also be plenty of occasions when it won’t make sense to totally rely on AI coding tools, either due to them not working well enough in some codebases or contexts, or when devs purposefully choose not to lean on them. It’s worth exploring the directions in which the software engineering profession could go in environments where almost all code is generated by AI via prompts, rather than being typed out by a developer. This would be a sea change that impacts the profession, but how? Let’s run the rule over the good, bad, and the ugly possibilities, starting with potential negatives.

3. The bad: declining value of expertise

Some things that used to be valuable will be mostly delegated to the AI:

Prototyping. Platforms like Lovable and Replit explicitly advertise themselves as ways for nontechnical folks to build software. In a recent ad, Replit teamed up with legendary NBA star Shaquille O’Neal, who vibe-coded an app for getting the top 5,000 pickup lines in online history, among others:

Still, with AI tools, product folks, designers, and business people can build their own prototypes, and no longer need a dev to make an idea real. Alongside this, it’ll become a baseline expectation for each and every developer to be able to generate concept apps fast.

Being a language polyglot will probably be less valuable. Engineers who are experts in multiple languages have traditionally been coveted because engineering teams often like to hire experts in their own stack. So, a team using Go would often prefer to hire senior engineers with Go knowledge, and the same with Rust, TypeScript, etc. I’d qualify this with the fact that some forward-thinking teams ignore candidates’ expertise in their specific language and assume that competent engineers can pick up languages on the go.

But with AI writing most of the code, knowing several languages will become less of an advantage when any engineer can jump into any codebase and ask the AI to implement a feature – which it will probably take a decent stab at. Even better, you can ask AI to explain parts of the codebase and pick up a language much faster than without AI tools.

The end of language specializations and frontend/backend specializations? In the early 2000s, I recall most job adverts wanted candidates for a specific language: ASP.NET developer, Java developer, PHP developer, and so on. From the mid-2010s, more companies started to hire based on specialization in the stack: backend developer, frontend developer, native iOS/Android developer, cross-platform mobile developer. On the backend, it became accepted that a dev who knew a language like Go pretty well could pick up TypeScript, Scala, Rust or other languages as necessary. Roles where specific languages still mattered were in native mobile, where the iOS and Android frameworks were different enough to require deep expertise in one or the other.
But today with AI, a backend engineer can prompt decent frontend code, cross-platform code, or even attempt native mobile code. With these tools, I struggle to foresee startups hiring separate frontend and backend devs: they’ll just hire a specialist who they trust will use AI to unblock themselves across the stack.

Implementing a well-defined ticket is something AI will increasingly do: taking a JIRA or Linear ticket, such as a bug report or small feature request, and implementing it. Even today, the team at Cursor has an automation where all Linear tickets are automatically passed to Cursor, which one-shots an implementation. A dev can then choose to merge it or iterate on it. The more context is passed and the better the models are, the more likely the output will be mergeable. This will be a major shift, especially in hierarchical workplaces where project or product managers have long been writing detailed tickets for devs to implement with no questions asked!

Refactoring will probably be ever more delegated to AI. It’s already pretty good at refactoring and the tools will probably only get better. Refactoring by hand will be much slower than spelling out what type of refactor you want the AI to do. Of course, in the pre-AI era, modern IDEs also offered powerful refactoring capabilities that sped up tedious tasks, like renaming functions or classes across the entire codebase, or extracting functions. Still, there will always be the risk that AI messes things up, especially with large refactorings. This is why having ways to validate code will surely be even more important this year.

Paying careful attention to generated code – in some cases? Generating code from AI prompts can lead to verbose code, or duplication of existing code instead of using an abstraction. But there are times when this is perfectly acceptable, such as when building proofs of concept, or when topics like program efficiency are unimportant.
Software engineer Peter Steinberger is building greenfield software, and said he’s stopped reading code generated by AI:

Even so, reading code will remain important when extending existing mature software, or when security issues need to be avoided. In general, if shipping code that doesn’t work would hurt the business, then you’ll want to both test and review it for correctness.

4. The good: software engineers more valuable than before...
