How will AI change operating systems? Part 1: Ubuntu and Linux

👋 Hi, this is Gergely with a subscriber-only issue of the Pragmatic Engineer Newsletter. In every issue, I cover challenges at Big Tech and startups through the lens of engineering managers and senior engineers.

A deep dive with the Canonical team into how AI is changing Ubuntu, why they’re betting on local-first LLMs, and a look into other Linux distributions.

AI is changing how many of us software engineers build: we’re prompting more code and producing much more of it. Our tools are adapting too, with command-line interfaces gradually becoming more popular than IDEs. But what about operating systems? To find out, I asked the team at Ubuntu, the leading Linux distribution, and the Windows team how AI is changing their operating systems. Today’s article focuses on Linux and Ubuntu; we’ll cover Windows in a follow-up issue. I also reached out to Apple but, unsurprisingly, heard nothing back. If you’re reading this and happen to work at Apple, it’d be great to learn more! Jon Seager is VP of Engineering at Canonical, the company behind Ubuntu, and has shared new details about what the team has built for AI support, along with some new ideas they’re brewing up. Today, we cover:

1. Hardware enablement: support for GPUs, NPUs & DPUs

Jon mentioned he detects a “Dotcom Boom”-era vibe in the industry, similar to when “web 1.0” was created, and indeed, lots of startups today aim to be the Google-style success story of this “AI era”. At Canonical, the team asked: what does that mean for Ubuntu as an operating system? For instance, should Ubuntu join the competition and try to position itself closer to AI, or keep focusing on what it has done for decades: building an operating system? Jon said:

Hardware enablement means that if a computer (typically, a laptop) has AI-related hardware, Ubuntu should let it make full use of that hardware. This involves adding support for GPUs, NPUs, DPUs and other types of accelerator cards. Let’s briefly go through each.

GPUs

As most readers likely know, ‘GPU’ stands for Graphics Processing Unit. Originally built for graphics rendering, its #1 use case today is no longer video games but AI training and inference. GPUs come in two forms:

NVIDIA leads the market in data center GPUs with its Blackwell family, and in standalone GPU cards with the NVIDIA RTX series. Other vendors like AMD offer GPUs for data centers (such as the Instinct MI300 series) and for PCs (the AMD Radeon series).

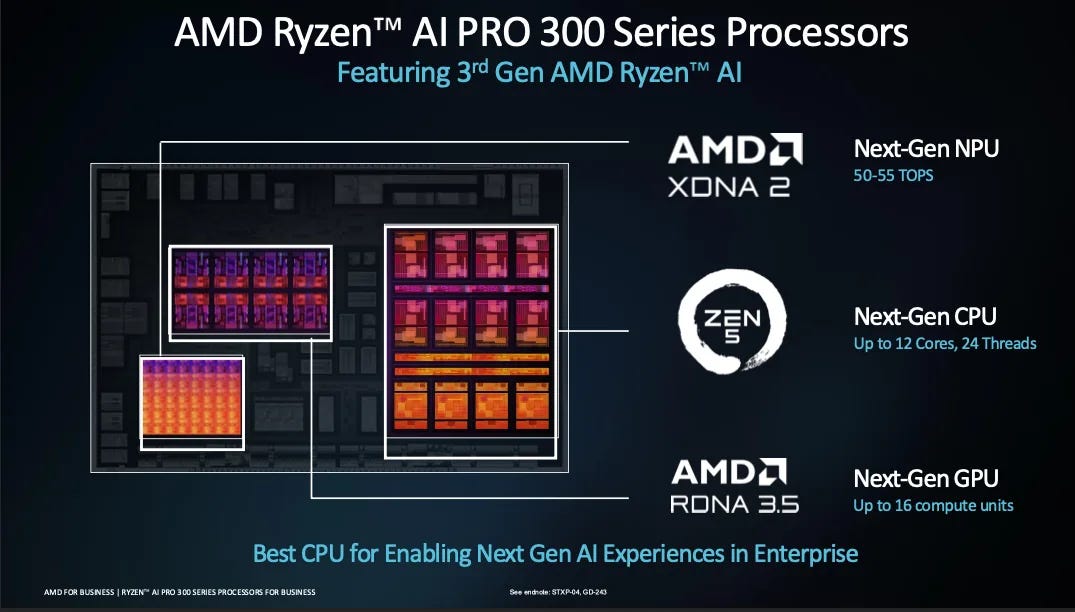

NPUs

Neural Processing Units (NPUs) are also called “AI accelerators.” An NPU is a dedicated block on a modern processor’s system-on-a-chip (SoC), designed specifically to run AI inference efficiently on-device. In recent years, many processors have shipped with a dedicated NPU block, including all of Apple’s M-series chips (M1 and later), Intel’s Core Ultra and Core Ultra “Series 2”, AMD’s Ryzen AI 300 series, and Qualcomm’s Snapdragon X Elite and Snapdragon X Plus. NPUs are typically rated in TOPS, short for Tera (trillions of) Operations Per Second. The operations counted are “multiply-accumulate” (MAC) operations, which Qualcomm describes as:

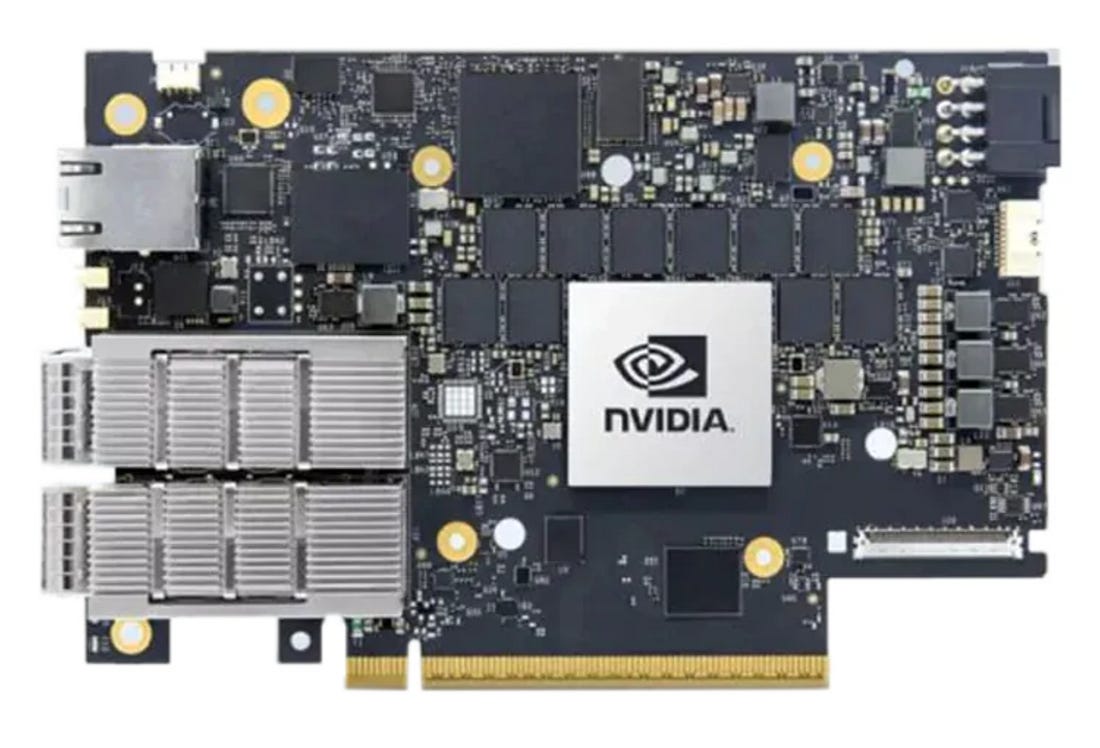
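The arithmetic behind a TOPS rating is simple: each MAC unit completes one multiply-accumulate per clock cycle, and each multiply-accumulate counts as two operations (a multiply and an add). Here is a minimal Python sketch; the MAC count and clock speeds are made-up numbers for a hypothetical NPU, not the specs of any real chip.

```python
def peak_tops(mac_units: int, frequency_hz: float) -> float:
    """Peak TOPS for an accelerator with `mac_units` MAC units at `frequency_hz`.

    Each MAC unit performs one multiply-accumulate per clock cycle, and each
    multiply-accumulate counts as two operations (a multiply and an add).
    """
    ops_per_second = 2 * mac_units * frequency_hz
    return ops_per_second / 1e12  # "tera" = one trillion (10**12)


# Hypothetical NPU with 16,384 MAC units (illustrative, not a real chip).
MAC_UNITS = 16_384

for clock_ghz in (1.0, 1.5):
    tops = peak_tops(MAC_UNITS, clock_ghz * 1e9)
    print(f"{clock_ghz} GHz -> {tops:.1f} TOPS")
# 1.0 GHz -> 32.8 TOPS
# 1.5 GHz -> 49.2 TOPS
```

Because the rating scales linearly with clock speed, a TOPS figure computed at peak frequency overstates what the NPU delivers while it runs at a lower clock to save power or stay cool.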

How TOPS is calculated: TOPS = 2 × MAC unit count × Frequency / 1 trillion. The factor of 2 is there because each multiply-accumulate counts as two operations: a multiply and an add. “Frequency” refers to the clock speed (cycles per second) at which an NPU and its MAC units (as well as a CPU or GPU) operate, which directly influences overall performance. Processors at higher frequencies can perform more operations, but higher frequencies also mean more energy consumed, more heat generated, and shorter battery life. The TOPS number quoted for a processor is generally calculated at its peak operating frequency.

NPUs are often ideal for low-power local inference and for running smaller local models. They are useful for things like local speech-to-text (dictation, captions, meeting transcription), video background blur or replacement and auto-framing, and summarization with small local language models. NPUs are now common in laptop and PC processors, and many phone processors ship with them too, such as Apple’s A-series chips in iPhones and Google’s Tensor processors in Pixel phones. Basically, NPUs bring efficiently-running local models on laptops one step closer.

DPUs

Data Processing Units (DPUs) are typically found in data centers, where they move massive amounts of data fast. NVIDIA’s explanation:

Several major chipmakers manufacture DPUs, of which NVIDIA’s BlueField family is the most widespread. Others include AMD Pensando DPUs (Elba, Giglio) and Intel IPU/DPU cards (E2100, E2200 series). DPUs are most commonly deployed by hyperscale cloud providers (AWS, Azure, GCP, OCI), in AI and high-performance computing (HPC) data centers, and in larger private clouds. DPUs make sense when GPU traffic is huge, or when networking overhead such as telemetry is so great that it could overwhelm the CPUs handling the data transfer.

2. Hardware partnerships

It’s easiest to add support for hardware by working with the leading chip manufacturers, which is why Ubuntu has relationships with hardware vendors. As a result, the OS sometimes offers day-one support for cutting-edge AI supercomputers.

Partnership with NVIDIA

In September 2025, Canonical announced it would package and distribute the full NVIDIA CUDA toolkit directly within Ubuntu’s repositories. This collapses what was previously a multi-step manual installation (downloading from NVIDIA’s site, importing GPG keys, pinning a separate APT repo, and praying nothing broke) into a single standard apt install. Packaging and distributing the CUDA toolkit this way makes developing with CUDA easier. From Jon:

Ubuntu’s strategy of working directly with chipmakers seems to be working. NVIDIA recently discontinued its custom NVIDIA DGX OS — a modified Ubuntu it maintained for years — and now ships plain Ubuntu. Jon:

At CES 2026 in January, Canonical announced Ubuntu support for the NVIDIA Vera Rubin NVL72 rack-scale architecture, with day-one platform readiness in Ubuntu 26.04 LTS (Long-Term Support: up to 15 years for enterprise customers).

AMD and Intel

It’s clear that Ubuntu and NVIDIA enjoy a strong partnership, but Canonical aims to remain neutral, Jon says:

Last December, Ubuntu announced native support for AMD ROCm; it also ships with Intel’s OpenVINO toolkit. Ubuntu 26.04 LTS will be the first major distribution to natively package the compute stacks of all three vendors (NVIDIA, AMD, and Intel) with long-term enterprise support. Under Ubuntu Pro, ROCm LTS releases receive up to 15 years of security maintenance. Security maintenance means that if vulnerabilities or critical incompatibilities are discovered in an LTS version, Canonical will patch them even after the upstream vendor stops supporting that version and stops backporting security patches to it. AMD Instinct accelerators are gaining traction in HPC and sovereign AI deployments, as enterprises look for alternatives to CUDA-locked hardware. AMD’s SVP and Chief Software Officer, Andrej Zdravkovic, said the partnership would make it “easier for developers and enterprises to deploy AMD solutions on supported systems.” Chip vendors want to collaborate because it means less work for them to add operating system-level support. Jon:

3. Architecture variants

Modern x86 processors support multiple instruction set generations, called microarchitecture levels: x86_64 v1, v2, v3, and v4. ARM has a similar hierarchy. Each generation adds capabilities, such as the AVX-512 instructions that accelerate machine learning workloads. Take the x86_64 instruction set, which is versioned. These are the versions:
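Per the x86-64 psABI, the levels are cumulative: a CPU qualifies for a level only if it supports every feature of that level and of all levels below it, and a binary built for a level is only guaranteed to run on CPUs that reach it. Here is a minimal Python sketch of that compatibility rule, using the psABI’s feature names. The function names are hypothetical, and reading the features from a real CPU (for example via /proc/cpuinfo, which spells some of them differently) is left out.

```python
from typing import Optional

# Features each x86-64 microarchitecture level adds, per the x86-64 psABI.
# Levels are cumulative: v3 also requires everything in v1 and v2.
LEVELS: list[tuple[str, frozenset[str]]] = [
    ("x86-64-v1", frozenset({"CMOV", "CX8", "FPU", "FXSR", "MMX",
                             "OSFXSR", "SCE", "SSE", "SSE2"})),
    ("x86-64-v2", frozenset({"CMPXCHG16B", "LAHF-SAHF", "POPCNT", "SSE3",
                             "SSE4_1", "SSE4_2", "SSSE3"})),
    ("x86-64-v3", frozenset({"AVX", "AVX2", "BMI1", "BMI2", "F16C", "FMA",
                             "LZCNT", "MOVBE", "OSXSAVE"})),
    ("x86-64-v4", frozenset({"AVX512F", "AVX512BW", "AVX512CD",
                             "AVX512DQ", "AVX512VL"})),
]
LEVEL_NAMES = [name for name, _ in LEVELS]


def highest_level(cpu_features: set[str]) -> Optional[str]:
    """Return the highest level the CPU fully supports, or None."""
    best = None
    for name, required in LEVELS:
        if not required <= cpu_features:
            break  # one missing feature caps the CPU below this level
        best = name
    return best


def can_run(binary_level: str, cpu_features: set[str]) -> bool:
    """True if a binary built for `binary_level` is safe to run on this CPU."""
    cpu_level = highest_level(cpu_features)
    if cpu_level is None:
        return False
    return LEVEL_NAMES.index(cpu_level) >= LEVEL_NAMES.index(binary_level)


# A CPU with every v1-v3 feature but no AVX-512.
v3_cpu = set().union(*(features for _, features in LEVELS[:3]))
print(highest_level(v3_cpu))          # x86-64-v3
print(can_run("x86-64-v1", v3_cpu))   # True: the old baseline still runs
print(can_run("x86-64-v3", v3_cpu))   # True: can use AVX2, FMA, etc.
print(can_run("x86-64-v4", v3_cpu))   # False: needs AVX-512
```

In this model a v1 build runs on every CPU, while a v3 build runs only on v3-capable CPUs but may use their newer instructions, which is why shipping per-level binaries pays off.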

Until recently, Ubuntu ran slower than it could have on newer CPUs in order to keep supporting older ones: Ubuntu for AMD64 was compiled for the AMD64 v1 baseline. Targeting v1 has the advantage that even the oldest AMD64 processors can run Ubuntu. But if Ubuntu had required v2 instructions, v1 processors could not have run the OS at all! The downside was that the OS did not use newer instructions: on a modern processor with instruction set extensions like AVX-512, Ubuntu’s binaries left them unused. Canonical has reworked its build infrastructure to produce binaries that target specific architecture variants. So, if you’re running an x86_64 v3-compatible processor, you can download an Ubuntu variant compiled specifically for x86_64 v3. The tradeoff for the Ubuntu team is building binaries several times, which costs more processing time and storage on their end. Then again, the Ubuntu team doing this once means users don’t need to recompile anything, which made it an easy tradeoff, Jon told me. Ubuntu now supports x86_64 v3 as an architecture variant and plans to add more. Jon says:

Adding support for architecture variants was a significant undertaking. Jon explains:

4. Betting on local-first & plans for agentic workflows

If you’ve tried to run an LLM locally on your machine, you’ll know it comes with friction. Jon:...
